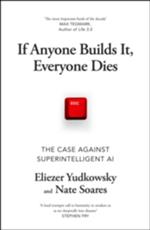

The founder of the field of AI risk explains why superintelligent AI is a global suicide bomb and why we must halt development immediately.

AI is the greatest threat to our existence that we have ever faced. The scramble to create superhuman AI has put us on the path to extinction, but it’s not too late to change course. Two pioneering researchers in the field, Eliezer Yudkowsky and Nate Soares, explain why artificial superintelligence would be a global suicide bomb and call for an immediate halt to its development. The technology may be complex, but the facts are simple: companies and countries are in a race to build machines that will be smarter than any person, and the world is devastatingly unprepared for what will come next. How could a machine superintelligence wipe out our entire species? Will it want to? Will it want anything at all? In this urgent book, Yudkowsky and Soares explore the theory and the evidence, present one possible extinction scenario, and explain what it would take for humanity to survive. The world is racing to build something truly new, and if anyone builds it, everyone dies.

If Anyone Builds It, Everyone Dies

ISBN: 9781847928924

Format: Hardback

Publisher: The Bodley Head Ltd

Origin: GB

Release Date: September 2025
